- February 28, 2024

- Posted by: Geetha S

- Categories: Airbyte, Snowflake

Introduction:

Airbyte, one of the leading open- source platforms facilitating data integration and ELT processes, is designed to simplify the process of moving and managing data across various sources. With its user-friendly interface and extensibility, Airbyte enables seamless data integration, making it an ideal choice for businesses seeking efficiency and flexibility in handling diverse datasets.

Snowflake is a cloud data platform that offers a single, integrated solution for a data interchange, data lake, and warehouse. Snowflake offers high performance, security, querying structured and semi-structured data at any scale.

This blog details the steps involved in loading a CSV (Comma Separated Values) file hosted on Google Cloud Storage (GCS) into Snowflake using Airbyte.

Prerequisites:

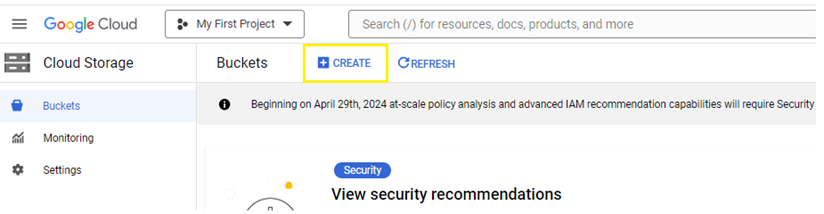

- Create a storage bucket in Google Cloud Storage. Go to Cloud Storage -> Buckets and click on +create.

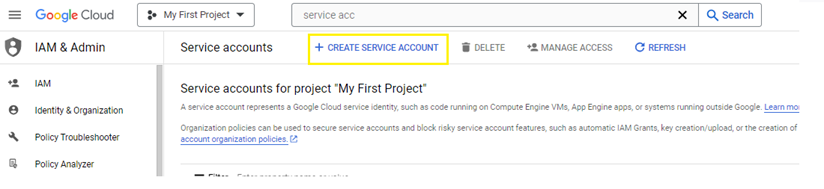

- Create a Service Account in GCP. Go to IAM & Admin -> Service Accounts and click on +create service account.

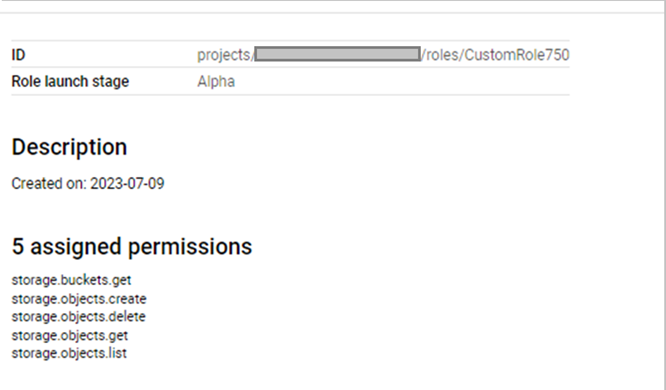

- Create IAM role with object access permissions and assign this role to the service account.

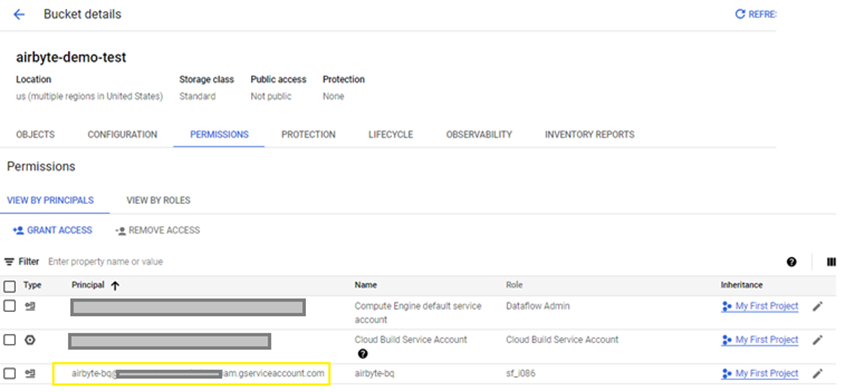

- Add this service account to GCS bucket permissions.

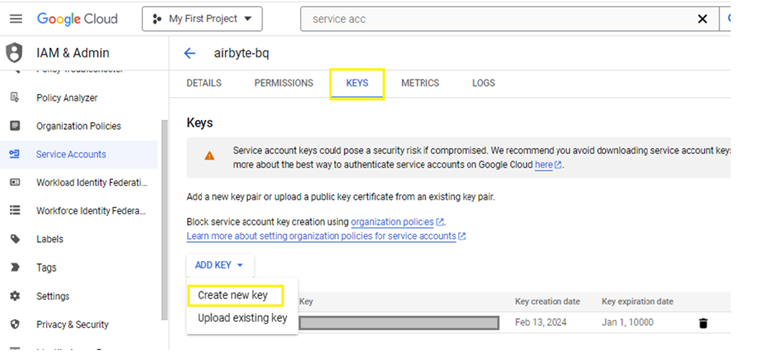

- Create an authentication key. Click on the service account and go to ‘keys’ option and click on Add Key -> Create New Key.

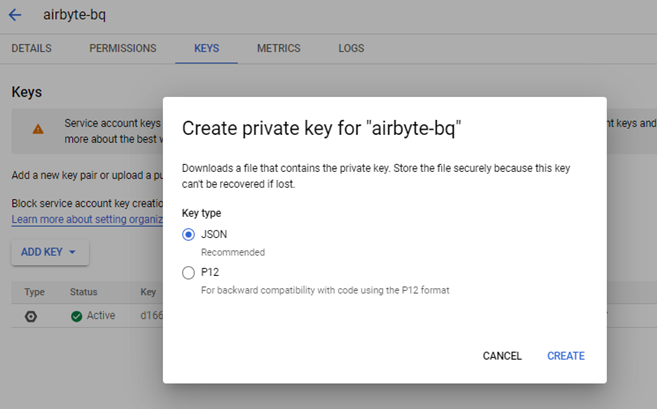

- Generate the key in JSON format and download the file when it is created.

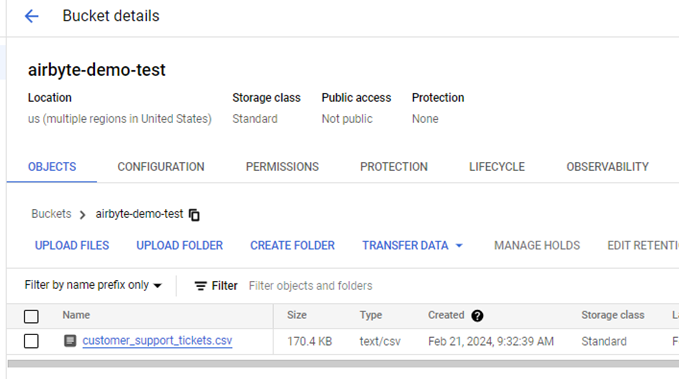

- Upload the csv file to be loaded in the bucket.

Setup Data Integration:

Step 1: Set up the source connector in Airbyte.

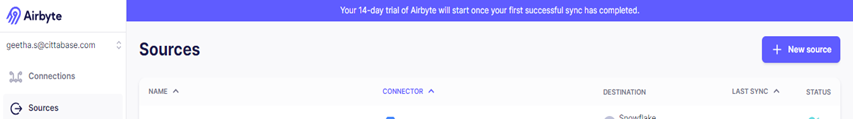

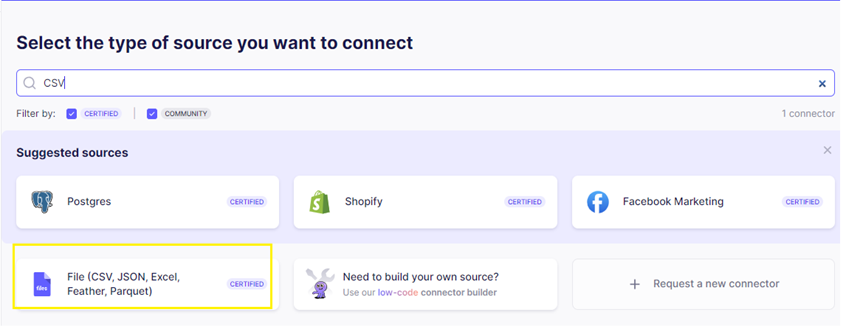

- From the Airbyte UI, click the Sources tab, then click + New source

- Select Files (CSV, JSON, Excel, Feather, Parquet) from the list of available sources.

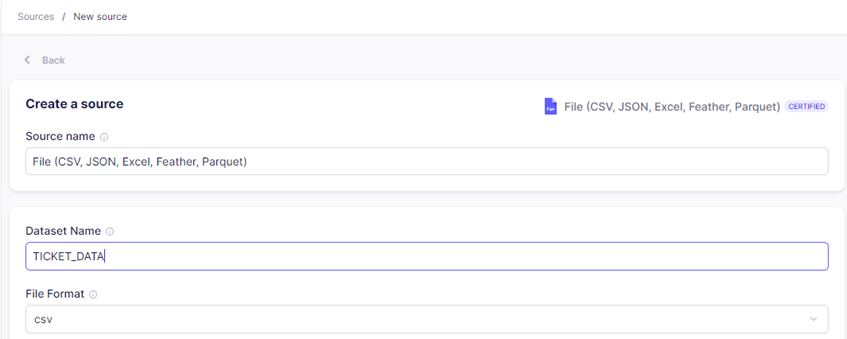

- For Dataset Name, enter the name of the final table into which the data is to be loaded. In this case TICKET_DATA. For File Format, select the format of the file to replicate from the dropdown menu. In this case – csv.

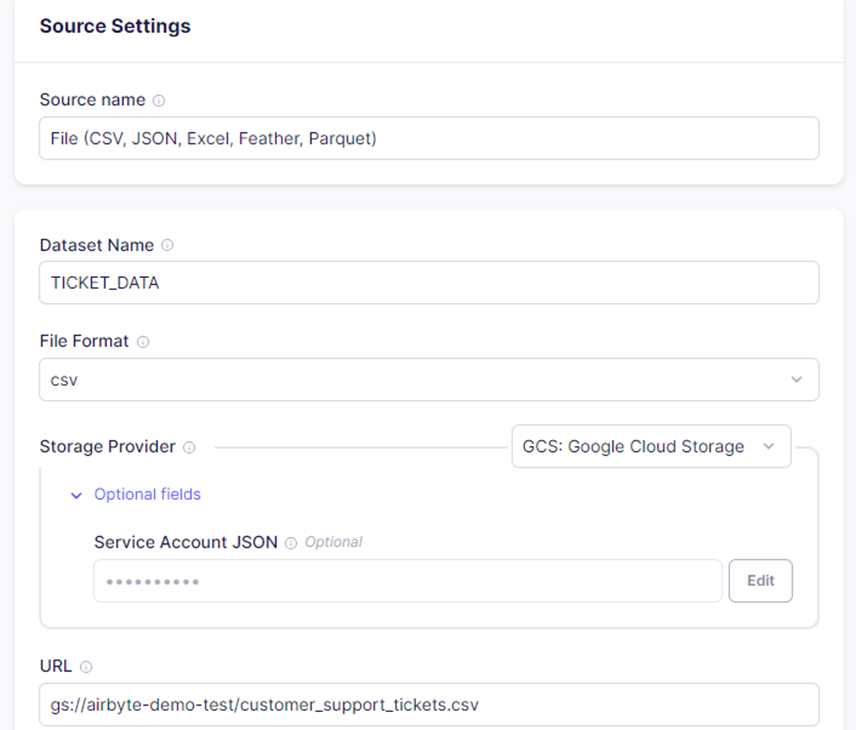

- For Storage Provider, use the dropdown menu to select the Storage Provider or Location of the file(s) which should be replicated, then configure the provider-specific fields as needed. Here the csv file is hosted on GCS storage bucket. Provide the complete information from the authentication key file created for the service account in ‘Service Account JSON’. For URL, enter the URL path of the file in GCS, which is to be replicated and click Set up Source.

Step 2: Setup destination connector.

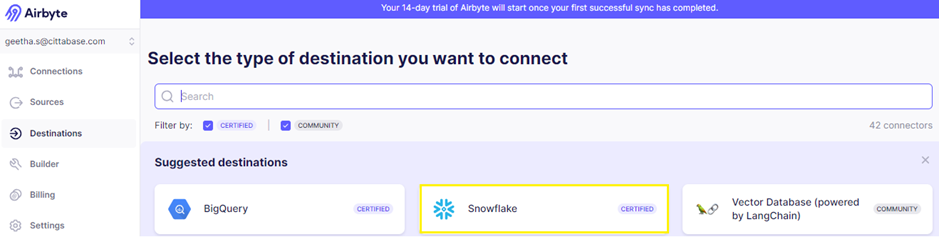

From the Destination tab, + New destination and select snowflake from the list of available sources.

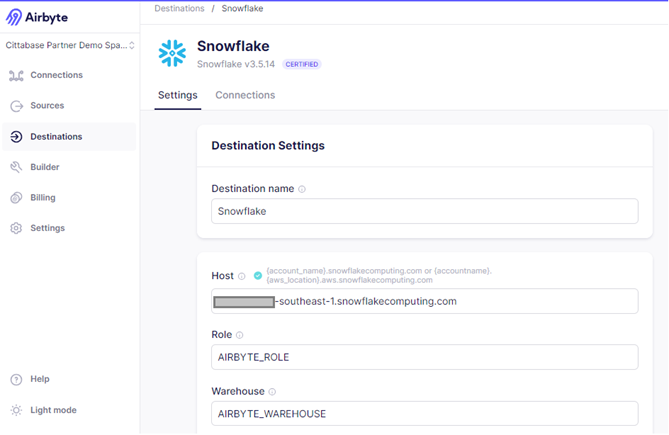

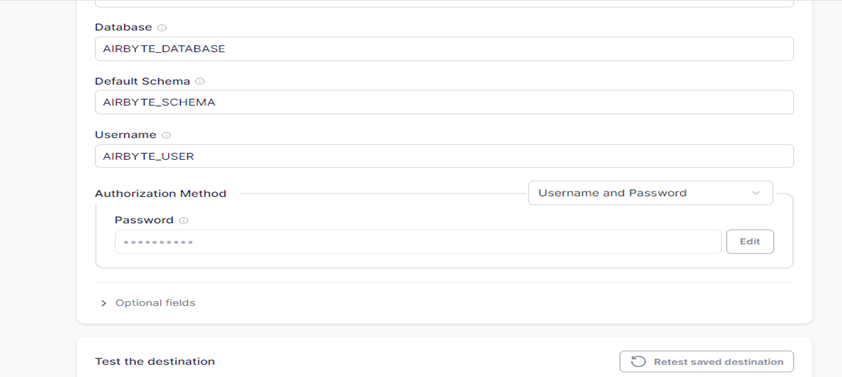

Step 3: Set up Snowflake as a destination in Airbyte.

Enter the details of account identifier in the Host, Role, Warehouse, Database, Schema and User credentials. Make sure that the user has OWNERSHIP permission to write data into Snowflake, track costs pertaining to Airbyte, and control permissions at a granular level. After configuring, test the destination connector.

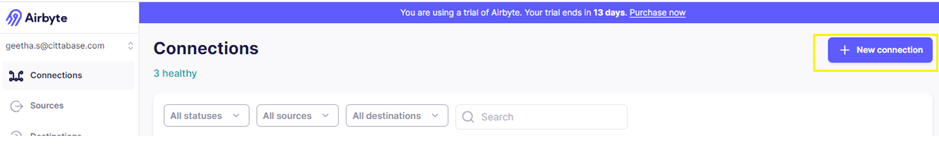

Step 4: Set up Connection.

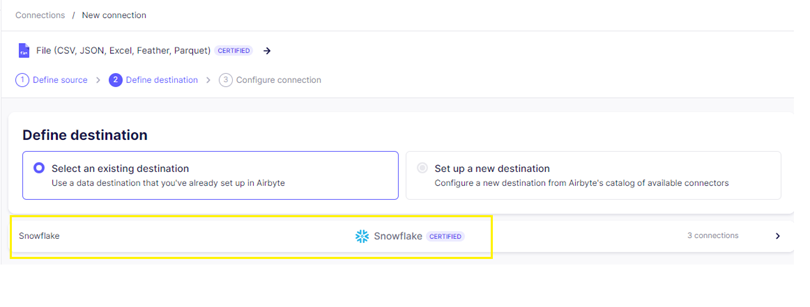

- Click the new connection in Connection tab.

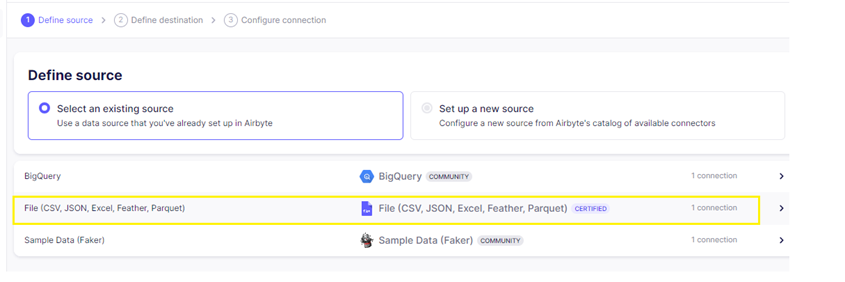

- Choose the csv source connection created.

- Choose the snowflake destination connection created.

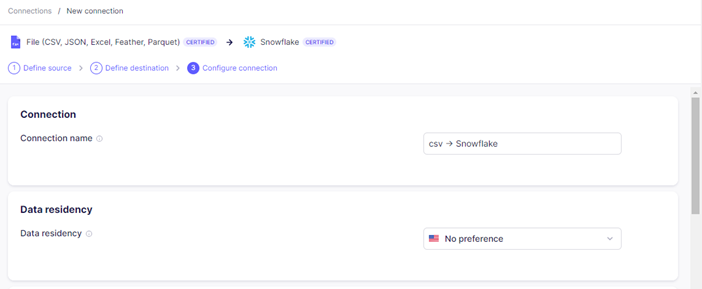

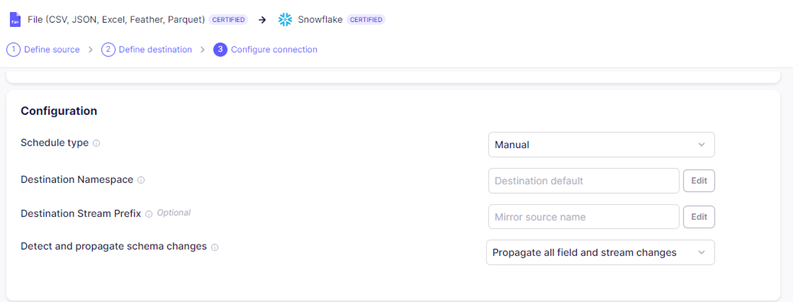

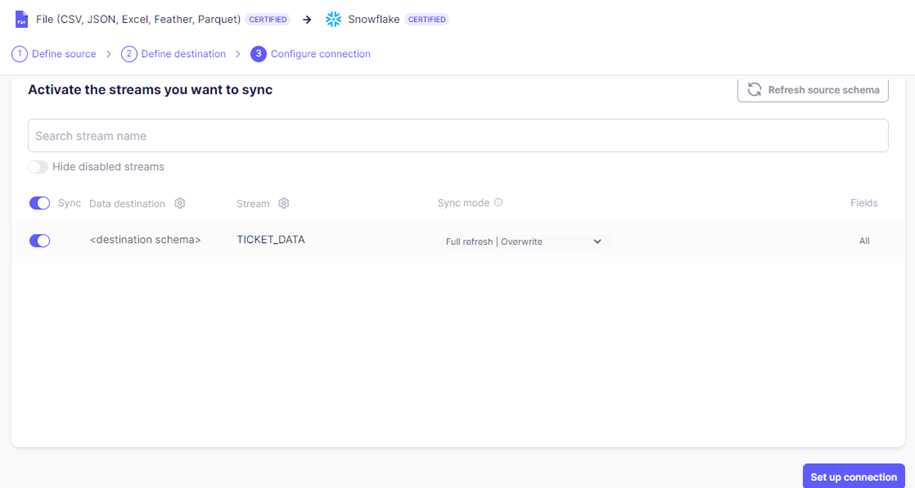

Step 5: Configure the connection.

- Enter the preferred connection name and data residency.

- Enter the preferred Schedule type, Destination Namespace, Destination Stream Prefix and Detect and propagate schema changes. Destination Namespace is the target schema into which the data will be replicated.

- Enable the stream which needs to be synced and click set up connection.

Step 6: Sync CSV file data from GCS to snowflake:

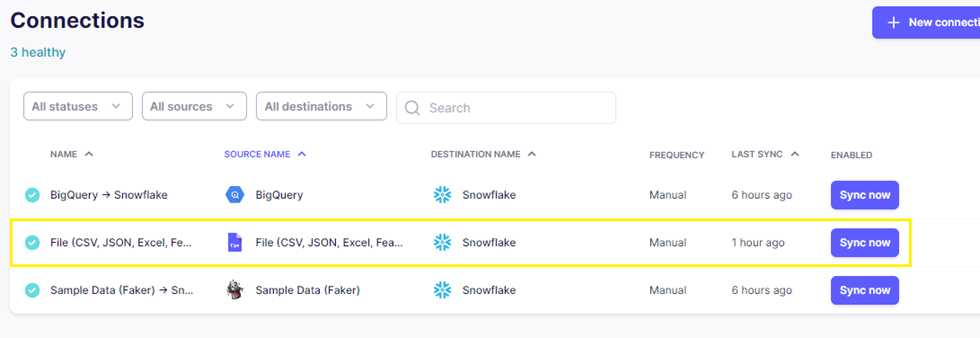

Select the connection created and click ‘sync now’ to let the connection sync data from the csv file to snowflake.

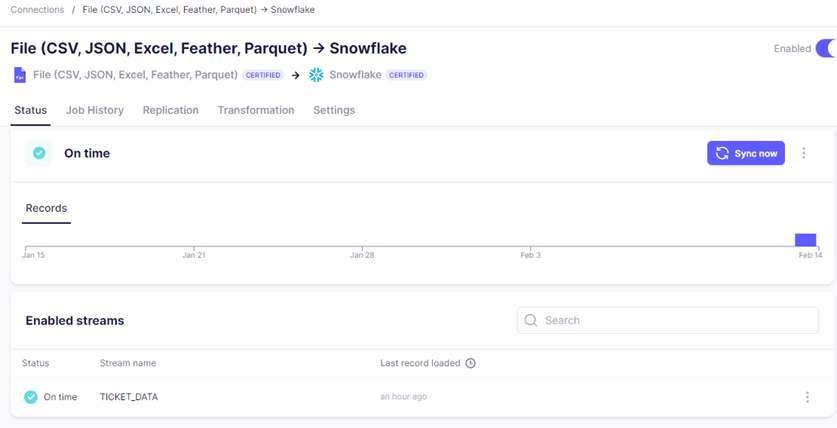

Step 7: Check the status of the job.

- Check the status of the connection.

Step 8: Verify data in snowflake.

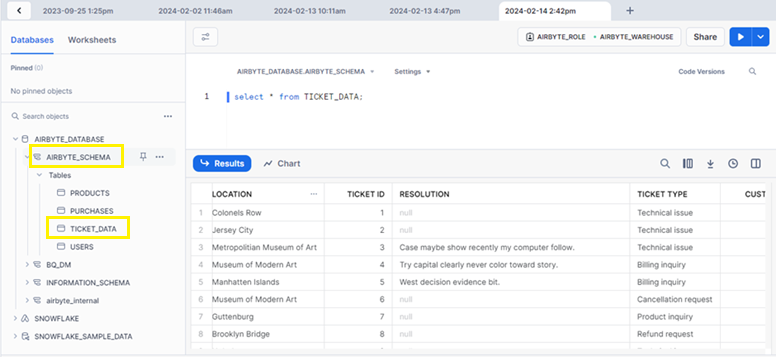

A new table TICKET_DATA is created under destination schema (AIRBYTE_SCHEMA) which is defined in destination connector(Snowflake) and data from csv file is loaded into the table successfully.

Conclusion:

By following these steps, one can successfully establish connection in Airbyte to sync data from a CSV file hosted on GCS into Snowflake.

Cittabase specializes in modernized data platform implementations and is a proud partner with both Snowflake and Airbyte. We offer a range of services designed to meet your data integration and migration requirements. Please feel free to reach out to us.